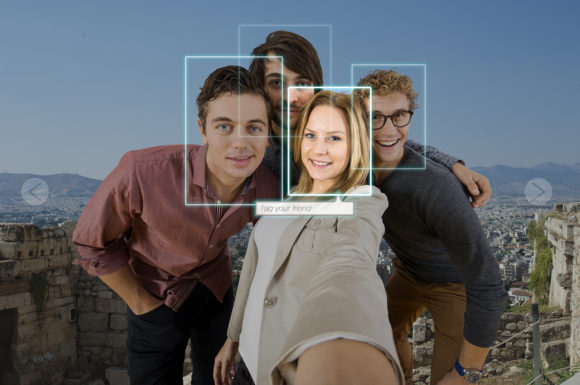

The European Union has accepted there is no escape from facial recognition, but is seeking to ensure any roll-out that includes U.S. and Chinese players will abide by European values like strict personal privacy.

Facial recognition has emerged as a hot-button issue as the EU prepares to outline its plans to regulate artificial intelligence next month. Privacy advocates are urging legislation to prevent abuses, while law enforcement is warning against banning a tool that can make societies safer.

The technology is used in a number of contexts — from unlocking smartphones to border control in airports to finding missing children. It’s a potentially powerful tool that could let law enforcement quickly sweep large crowds for criminals. But, aside from costs to privacy, it’s often inaccurate, especially for women and people with darker skin.

“This is a privacy risk on a totally new scale,” said Stefan Heumann, co-director of think tank Stiftung Neue Verantwortung in Berlin. “Anonymity in public spaces will cease to exist.”

So far, the U.S. and China dominate the industry. Hangzhou Hikvision Digital Technology Co. and Zhejiang Dahua Technology Co. control one-third of the global market for video surveillance, according to a report by Deutsche Bank AG, even keeping watch over London’s subway system.

In a January draft of the upcoming paper on AI, the EU’s executive body said any new regulations would target high-risk applications, such as predictive policing and biometric identification systems. All actors – based both within the EU and abroad – would have to abide by the rules if they operate in Europe.

Companies and organizations could be required to hand over documentation of a system’s data sets, programming and accuracy for inspection before deployment. If they can’t guarantee the facial recognition technology was developed in accordance with European values, such as respect for privacy, companies would have to retrain their systems in Europe with European data sets, the document said.

The EU is due to unveil a final version of the paper in mid-February. An earlier text suggested banning facial recognition in public spaces for several years, but the provision wasn’t among the three policy options officials recommended that the commission pursue. EU officials say the option is unlikely to make it into the final version.

“The starting point is that you will have to be cautious in the way that you use it,” Margrethe Vestager, the EU’s digital czar, said of the EU approach on facial recognition at a hearing with European lawmakers on Monday. “Otherwise, fundamental values will be very difficult to uphold.”

Meanwhile, EU data protection authorities on Thursday sounded the alarm over the technology’s unfettered use, urging companies and agencies to consider “less intrusive” tools.

Facial recognition is at the heart of a long-running debate, with privacy advocates butting heads with law enforcement over authorities’ access to personal information. That debate has been complicated by high error rates, which stem from both the systems themselves and the quality of the photos being matched.

Women and people with darker skin tones are particularly susceptible to false positives. A 2018 study from MIT found that commercially available facial-analysis programs were inaccurate 35% of the time for darker-skinned women.

France’s government has come under fire over its plans to test and implement facial recognition surveillance in train stations and other public spaces. The U.K., which exited the EU on Friday, is deploying the cameras in London, a step that human-rights groups have called “dangerous and sinister.”

“In society and in police work we are permanently confronted with errors, but suddenly with facial recognition there’s zero tolerance,” Wim Liekens, chief information officer of the Belgian police, said at the Computers, Privacy and Data Protection conference in Brussels last week.

Liekens called for guidance around the use of facial recognition, but urged against banning it entirely, adding, “it is in fact also criminal” to withhold new, innovative tools from police.

The tech industry, whose executives used the World Economic Forum in Davos to warn against the dangers of unchecked AI, has come out in support of some controls. Microsoft Corp.’s Vice President of EU Government Affairs, John Frank, said technology providers should be required to make their systems auditable so that third parties can test them for accuracy.

“I don’t think you can put this genie back in the bottle,” Frank said at the Brussels conference. “You can regulate it, though.”

–With assistance from Aoife White and Stephanie Bodoni.
